Fang and Fawzi studied quantum channel capacities with respect to geometric Rényi relative entropy. It is commonly known that von Neumann entropy, quantum conditional entropy, and quantum mutual information play vital roles in quantum information theory. Apart from these entropic measures derived from the quantum relative entropy, other useful entropy-like quantities have also been well studied recently, such as max-information, collision entropy, and min- and max-entropies. All of these information measures are generated from quantum Rényi relative entropies by taking different limits. BS relative entropy can be seen as a fresh and powerful tool for resolving some specific challenges in quantum information-processing tasks.

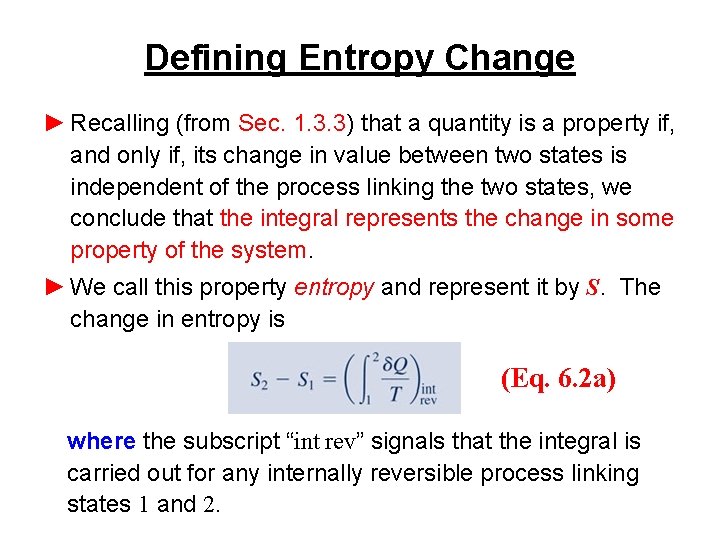

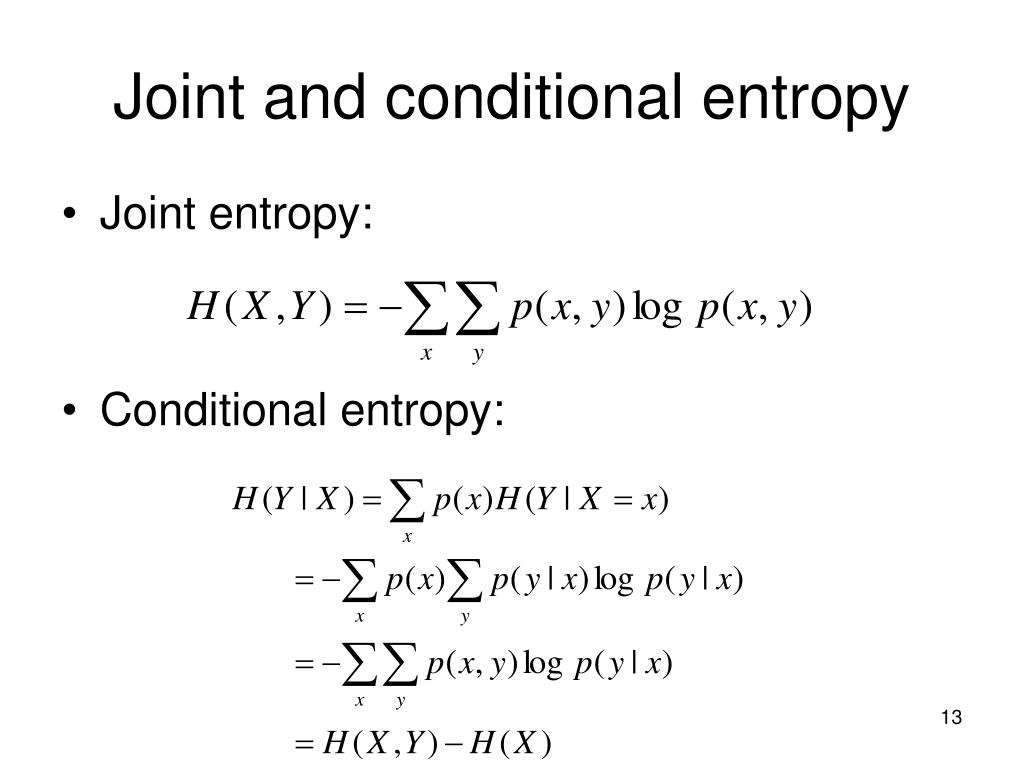

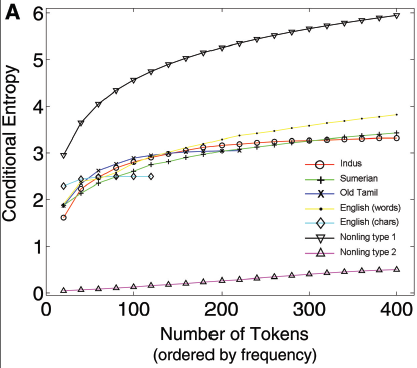

Classical relative entropy has operational meaning in information-theoretic tasks and can be used to describe the level of closeness between two random variables. The axiomatic approach introduced by Rényi can be readily generalized to quantum settings. Because quantum states do not commute in general, there are at least three distinct ways to generalize the classical Rényi relative entropy: the Petz-Rényi relative entropy, the sandwiched Rényi relative entropy, and the geometric Rényi relative entropy. These quantities are meaningful in different information-theoretic tasks, including source coding, hypothesis testing, state merging, and channel coding. Quantum relative entropy is the limit of both the Petz-Rényi and sandwiched Rényi relative entropies as α → 1, whereas the geometric Rényi relative entropy converges to the Belavkin–Staszewski (BS) relative entropy in the same limit. It is noteworthy that both the quantum and BS relative entropies are important extensions of the classical relative entropy to quantum settings.

Quantum relative entropy, a direct generalization of the classical relative entropy, has been studied extensively in recent decades. BS relative entropy is also an appealing and important quantity for quantum information-processing tasks: it can be used to describe the effects of possible noncommutativity of quantum states, which the quantum relative entropy does not handle well. BS relative entropy has also recently attracted the attention of researchers. Katariya and Wilde employed it to discuss quantum channel estimation and discrimination, and Bluhm and Capel contributed a strengthened data processing inequality for BS relative entropy. This property was first established by Hiai and Petz. Weak quasi-factorization results for BS relative entropy have also been produced.
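To make the difference between the two α → 1 limits concrete, here is a minimal numerical sketch. It assumes the standard textbook definitions, which this post does not state explicitly: D(ρ‖σ) = Tr[ρ(log ρ − log σ)] for the quantum relative entropy and Tr[ρ log(ρ^{1/2} σ^{-1} ρ^{1/2})] for the BS relative entropy. The helper names and the 2×2 example states are illustrative choices, not taken from the source, and both states are assumed to be full rank.

```python
import numpy as np

def herm_fn(a, fn):
    """Apply a scalar function to a Hermitian matrix via its eigendecomposition."""
    w, v = np.linalg.eigh(a)
    return (v * fn(w)) @ v.conj().T

def quantum_relative_entropy(rho, sigma):
    """Tr[rho (log rho - log sigma)] in nats; assumes full-rank states."""
    diff = herm_fn(rho, np.log) - herm_fn(sigma, np.log)
    return float(np.real(np.trace(rho @ diff)))

def bs_relative_entropy(rho, sigma):
    """Tr[rho log(rho^{1/2} sigma^{-1} rho^{1/2})] in nats; assumes full-rank states."""
    sqrt_rho = herm_fn(rho, np.sqrt)
    inv_sigma = herm_fn(sigma, lambda w: 1.0 / w)
    m = sqrt_rho @ inv_sigma @ sqrt_rho
    m = (m + m.conj().T) / 2  # symmetrize to suppress rounding error
    return float(np.real(np.trace(rho @ herm_fn(m, np.log))))

# Illustrative states (assumed for this example): rho does not commute with sigma.
rho = np.array([[0.7, 0.2], [0.2, 0.3]])
sigma = np.diag([0.6, 0.4])
# A diagonal state that does commute with sigma.
rho_diag = np.diag([0.7, 0.3])

print("non-commuting:", quantum_relative_entropy(rho, sigma), bs_relative_entropy(rho, sigma))
print("commuting:    ", quantum_relative_entropy(rho_diag, sigma), bs_relative_entropy(rho_diag, sigma))
```

For the commuting pair the two values coincide with the classical relative entropy of the diagonals, while for the non-commuting pair the BS value comes out larger, which is the sense in which it is sensitive to noncommutativity.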

Relative entropy (or Kullback–Leibler divergence) is a special case of Rényi relative entropy and an important ingredient in the mathematical framework of information theory.
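The special-case relationship can be checked numerically. The sketch below assumes the usual definition of the classical Rényi relative entropy, D_α(p‖q) = log(Σᵢ pᵢ^α qᵢ^(1−α)) / (α − 1), which is not written out in this post, and compares it with the Kullback–Leibler divergence as α approaches 1. The function names and the two example distributions are illustrative, and all probabilities are assumed to be strictly positive.

```python
import math

def renyi_divergence(p, q, alpha):
    """Classical Renyi relative entropy of order alpha (alpha != 1), in nats."""
    s = sum(pi ** alpha * qi ** (1.0 - alpha) for pi, qi in zip(p, q))
    return math.log(s) / (alpha - 1.0)

def kl_divergence(p, q):
    """Kullback-Leibler divergence sum_i p_i log(p_i / q_i), in nats."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Illustrative distributions (assumed for this example).
p = [0.7, 0.3]
q = [0.6, 0.4]

for alpha in (0.5, 0.9, 0.999, 1.001, 1.1, 2.0):
    print(f"alpha={alpha}: {renyi_divergence(p, q, alpha):.6f}")
print(f"KL: {kl_divergence(p, q):.6f}")
```

The printed values approach the Kullback–Leibler value from below for α < 1 and from above for α > 1, consistent with the Rényi relative entropy being nondecreasing in α.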

Rényi proposed an axiomatic approach to derive the Shannon entropy, and he found a family of entropies indexed by a parameter α.
